gpt-image-2: Inside OpenAI's First Reasoning Image Model

OpenAI's gpt-image-2 is the first AI image model that reasons before it draws. Launched on April 21, 2026 as part of ChatGPT Images 2.0, it combines near-perfect text rendering, 2K resolution via API, multilingual output across Japanese, Korean, Chinese, Hindi and Bengali, and the ability to generate up to eight coherent images from a single prompt. For creative teams that have spent three years fighting AI generators over typography, UI mockups and infographics, this is the release that moves image generation from party trick to production tool.

Two things separate gpt-image-2 from every image model that came before it. First, the new Thinking mode applies the same reasoning architecture behind GPT-5 to visual generation — the model plans compositions, searches the web for real-time context, runs multiple drafts, and verifies its own output before returning. Second, it renders text at near-100% accuracy in LM Arena blind tests, roughly three to five times faster than Google's Nano Banana Pro.

Here is the full breakdown: what shipped, how it performs against the competition, what it costs, and how to actually use it in a creative workflow this week.

What Is gpt-image-2

gpt-image-2 is OpenAI's new flagship image generation model. In consumer ChatGPT it is branded as ChatGPT Images 2.0; in the API and Codex it exposes as gpt-image-2 (with chatgpt-image-latest as the parity alias). The model replaces gpt-image-1.5 as the default and is the successor to the GPT-4o image engine that powered viral Studio Ghibli stylisations last year.

It shipped on April 21, 2026 across four surfaces simultaneously:

- ChatGPT (Free, Plus, Pro, Business, Enterprise)

- OpenAI API — model ID

gpt-image-2 - Codex, OpenAI's coding environment

- Microsoft Azure AI Foundry

Basic Instant generation is available on every ChatGPT plan including the free tier. The advanced Thinking mode and the multi-image reasoning features are gated behind ChatGPT Plus, Pro and Business.

Two Modes, One Model

This is the structural change most writeups bury. gpt-image-2 runs in two distinct modes that trade latency for quality:

| Mode | Speed | Output | Plan | Best For |

|---|---|---|---|---|

| Instant | ~3 seconds | 1 image | Free, Plus, Pro, Business | Social content, quick ideation, high-volume production |

| Thinking | 15–60 seconds | Up to 8 coherent images | Plus, Pro, Business | Campaigns, slide decks, infographics, typography, brand kits |

Instant mode is the successor to the GPT-4o image experience — fast, cheap, good. Thinking mode is the headline: it applies OpenAI's reasoning stack to image generation for the first time. When you engage it, the model decomposes the brief, can pull real-time information from the web, drafts candidate compositions, self-critiques, and returns a coherent set of up to eight images from a single prompt.

"Images are a language, not decoration. A good image does what a good sentence does." — OpenAI, announcing gpt-image-2

What Changed: The Five Things That Matter

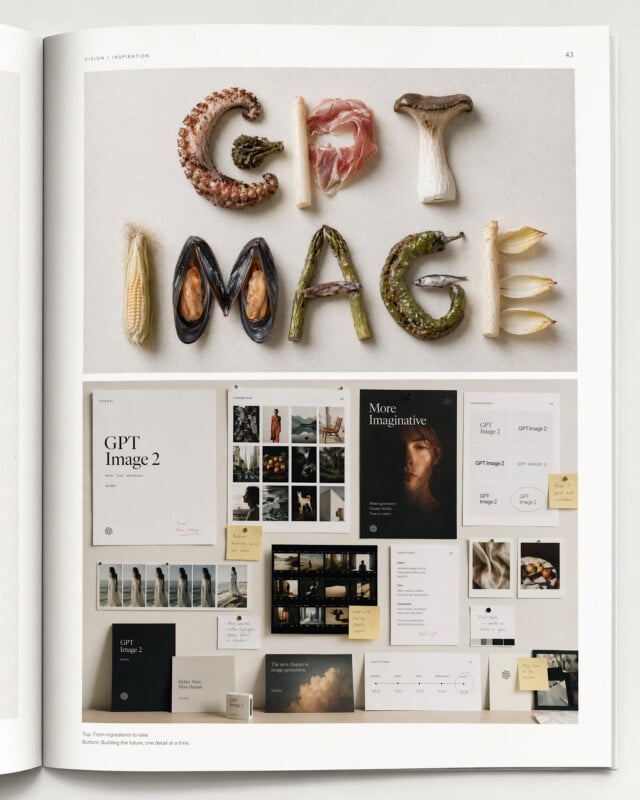

1. Near-Perfect Text Rendering

For three years, text has been the visible weak spot of every AI image model. Signs read as nonsense. Logos became runes. Labels on a product shot turned into melted Cyrillic. gpt-image-2 closes that gap in a single release.

Independent LM Arena blind tests put text-rendering accuracy near 100% — significantly ahead of Nano Banana Pro, which itself was already the 2025 leader. Testers have noted that the gap between gpt-image-2 and Nano Banana Pro on typography is roughly the same size as the gap between Nano Banana Pro and the original DALL·E.

What this unlocks in practice:

- Slides and decks generated end-to-end with real titles, bullets, and attribution

- Infographics with accurate numbers, legends and call-outs

- UI mockups with real button labels, menus and navigation

- Product packaging with legible ingredients, nutrition panels, barcodes

- Editorial graphics with headlines, pull quotes and captions

- Maps with readable place names and cartographic labels

If you previously kept an AI model for imagery and a separate text-overlay step in Figma or Photoshop, you can now collapse that into a single gpt-image-2 prompt for most marketing assets.

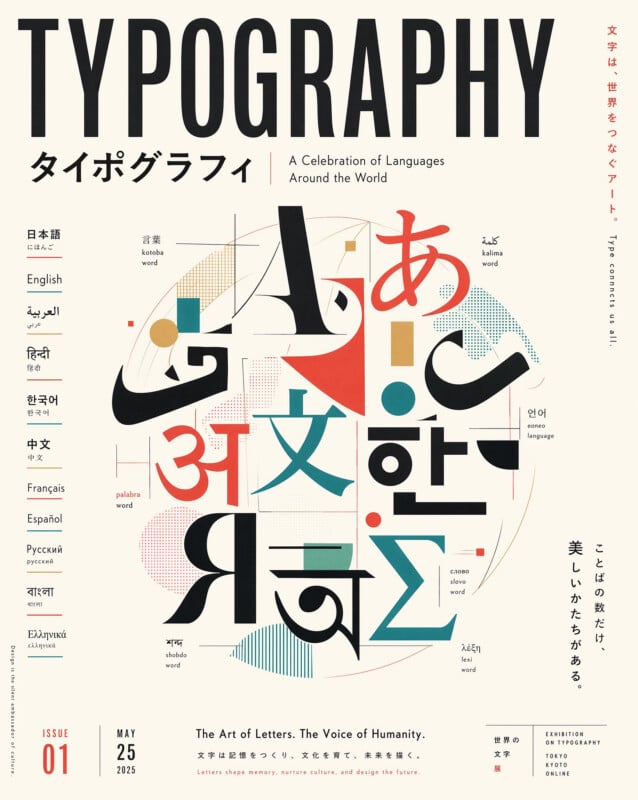

2. Real Multilingual Support

gpt-image-2 substantially extends non-Latin script support. OpenAI explicitly highlighted gains in Japanese, Korean, Chinese (Simplified and Traditional), Hindi and Bengali. For global brands this has been the single largest blocker to using AI image generation in-market — not creativity, but the inability to render a Japanese product label without a native speaker laughing at the output.

The model now handles dense mixed-script layouts (e.g., an e-commerce promo with Korean headline, English CTA, and Japanese disclaimer) without breaking typography.

3. 2K Resolution via API

Through the API, gpt-image-2 outputs up to 2048×2048 (2K) — up from 1024×1024 in the prior generation. More importantly, it now supports flexible aspect ratios from 3:1 wide to 1:3 tall, covering banners, mobile stories, posters and social formats natively without post-crop.

For creative teams this removes one of the last reasons to go straight to Midjourney or Nano Banana for hero art: you can now generate final-resolution assets in the same pipeline as your copy and reasoning calls.

4. Up to Eight Coherent Images Per Prompt

This is the feature that will actually change how creative teams brief work. In Thinking mode, gpt-image-2 can return up to eight distinct images from a single prompt — coherent with each other in style, subject and composition, not random variations.

In practice that means:

- A full campaign sheet (6 ad sizes) from one brief

- A 6–8 frame storyboard that keeps the same character and lighting

- A set of platform-tailored exports (feed, story, thumbnail, carousel) in one pass

- A brand kit with matching illustration, pattern and texture variants

"With both the intelligence of OpenAI's reasoning models and a vast understanding of the visual world, this model moves image generation from rendering to strategic design." — OpenAI

5. Reasoning With Real-Time Web Access

When run through a thinking or Pro model, gpt-image-2 can browse the live web during generation. Ask it to "draw this week's top 5 AI video launches as an infographic," and it will pull real data, design a coherent chart, and render a cited graphic in a single request.

This capability — knowledge-grounded image generation — was pioneered by ByteDance's Seedream 5.0 and extended by Nano Banana Pro with Google Search grounding. gpt-image-2 is the first OpenAI model to ship it natively, and it pairs more cleanly with ChatGPT's existing research workflows than either competitor.

gpt-image-2 vs Nano Banana Pro vs Seedream 5.0: Honest Comparison

This is where most blog posts turn into marketing. Here is the version that respects your time.

| Capability | gpt-image-2 | Nano Banana Pro | Seedream 5.0 |

|---|---|---|---|

| Text rendering | Near-100% (best) | Expert | Strong |

| Speed (Instant) | ~3 seconds | 10–15 seconds | ~7 seconds |

| Max resolution | 2K (API) | 4K | 4K |

| Multi-image per prompt | Up to 8 | 1 | 1 |

| Reference images | Limited | 14 (10 object + 4 char) | 10 |

| Hyper-realistic portraits | Strong | Best | Strong |

| Multilingual non-Latin | Best | Strong (Chinese leader) | Strong |

| Reasoning / Thinking mode | Native | Configurable | Native |

| Real-time web knowledge | Yes | Yes (Google) | Yes |

| SynthID / watermarking | Opt-in | Yes | No |

| Copyright indemnification | Standard | Yes | No |

| Ecosystem tooling | API, Codex, Azure | Photoshop, Figma | ComfyUI, API |

| Base API price (1K, medium) | ~$0.053 | $0.134 | ~$0.06 |

How to read this table: gpt-image-2 wins where text, speed, and campaign-volume output matter. Nano Banana Pro wins where reference-heavy portraiture and enterprise risk posture (watermarking, indemnification, Creative Cloud integration) matter. Seedream wins where price-per-4K-image and open tooling matter.

For a deeper methodology on how these comparisons are run — and why we refuse to pick a single "winner" — see our definitive guide to the best AI image generators of 2026.

Pricing: What You'll Actually Pay

Through the API, gpt-image-2 uses tiered per-image pricing at 1024×1024 resolution:

| Quality tier | Price per image | Typical use |

|---|---|---|

| Low | ~$0.006 | Thumbnails, previews, rapid iteration |

| Medium | ~$0.053 | Social posts, web graphics, standard marketing |

| High | ~$0.211 | Hero art, print, campaign finals |

Pricing scales with resolution and aspect ratio. As of launch week, some developer accounts still route through the gpt-image-1.5 billing path while OpenAI completes the rollout — budget owners should verify the exact line item after 48 hours of usage.

For ChatGPT users the calculus is simpler:

- Free tier: Instant mode, standard daily cap, Images 2.0 included

- Plus ($20/mo): Instant + Thinking, higher caps, multi-image sets

- Pro ($200/mo): Thinking at highest reasoning tier, uncapped-ish usage, priority queue

- Business/Enterprise: Admin controls, SSO, data governance, custom caps

Unlike Sora, which was sunset in March 2026 along with its API, gpt-image-2 shipped through Codex and Azure AI Foundry on day one. The contrast signals that OpenAI is re-prioritising programmatic access for image over video — a meaningful pivot for anyone building on top of its stack.

What This Means For The Image Model Wars

Six months ago, the consensus order in the image space was: Nano Banana Pro on top, Seedream 5.0 close behind, Flux for artistic work, Midjourney for aesthetics, and GPT Image as the also-ran that only won on text. That order is now scrambled.

Three shifts to internalise:

Reasoning is the new benchmark. Seedream, Nano Banana Pro and gpt-image-2 all now reason about compositions before generating. Static, one-shot diffusion models are quickly becoming the low end of the market. If your tooling doesn't expose a "thinking" tier, you are on borrowed time.

Text rendering is solved — for the leaders. What used to be a differentiator is now table stakes. The interesting question has shifted from "can it render text?" to "can it render my brand's typography, in Japanese, on a vertical phone screen, in under 10 seconds?" That eliminates 60% of the market overnight.

Speed is back in play. gpt-image-2's 3-second Instant mode collapses the latency gap between "model I use for ideation" and "model I ship to production." For the first time, a single model is fast enough for whiteboarding and good enough for finals.

For a view of how this sits next to the other big shift of 2026 — reasoning-native models like Seedream — see our breakdown of Seedream 5.0 Lite and visual reasoning and the full picture on Google's response with Nano Banana 2.

A Practical Playbook: How Creative Teams Should Use It This Week

gpt-image-2 is not a drop-in replacement for any existing model. It is a new default for specific jobs. Here is the allocation most creative teams will land on by the end of Q2 2026:

Use gpt-image-2 (Instant) for

- Social posts with copy baked in (avoids the Figma round-trip)

- Slide content generation inside Codex / ChatGPT

- UI mockups, wireframes and dashboard screenshots

- Multilingual campaign variants where non-Latin typography was previously a blocker

- High-volume iteration on concepts before committing to a hero

Use gpt-image-2 (Thinking) for

- Campaign kits — 6 ad sizes, 8 platform exports, one brief

- Storyboards and sequential frames that must keep character identity

- Infographics where the data has to be current and correct

- Brand kits: matching patterns, textures, illustrations, icons

- Any asset that used to require 3+ rounds of back-and-forth

Keep Nano Banana Pro for

- Hyper-realistic portraiture and celebrity-likeness work

- Reference-heavy workflows (6+ references, brand consistency over 14 assets)

- Anything shipping into regulated industries that require indemnification

- Photoshop and Figma native integrations

Keep Seedream for

- 4K final exports at aggressive price-per-image

- ComfyUI pipelines and open tooling

- Chinese-market first campaigns

Keep a dedicated artistic model (Midjourney / Flux) for

- Editorial illustration and mood work

- Cinematic lighting and painterly aesthetics

- Anything where "wrong but beautiful" beats "right but literal"

Do not consolidate onto a single image model. Every team we've watched standardise on one vendor has rebuilt a multi-model workflow within 90 days. The economics and quality frontier shift too fast — monthly, not annually.

How To Access gpt-image-2

gpt-image-2 is live across five surfaces today:

- ChatGPT (web, desktop, mobile) — Instant mode for all; Thinking mode on Plus/Pro/Business

- OpenAI API — model ID

gpt-image-2(orchatgpt-image-latestfor parity with ChatGPT) - Codex — native integration inside OpenAI's coding environment

- Microsoft Azure AI Foundry — enterprise-grade routing with your Microsoft contract

- XainFlow — available in Flow Studio alongside Nano Banana 2, Seedream 5.0 Lite, Recraft v3, Flux and others

Quick Start (API)

from openai import OpenAI

client = OpenAI()

response = client.images.generate(

model="gpt-image-2",

prompt="A minimalist SaaS dashboard mockup, blue accent, clean sans-serif labels reading 'Revenue', 'Active Users', 'Churn'",

size="1024x1024",

quality="medium",

n=1

)

For Thinking mode and multi-image sets, route through the Responses API with reasoning: { effort: "high" } and n up to 8. OpenAI's image endpoints require one-time API Organization Verification on most accounts — complete that first or your calls will silently drop back to gpt-image-1.5.

Use It Inside XainFlow

Inside XainFlow's Flow Studio, gpt-image-2 plugs into any workflow node as an image source. A typical multi-model pipeline looks like this:

- Research node — Claude or GPT-5 pulls brief + references

- gpt-image-2 (Thinking) — generates 6 coherent campaign variants

- Nano Banana Pro — regenerates hero shot with 14-reference consistency

- Upscale + Background Remover — finalises deliverables

- Asset library — publishes to the project, tagged with brand variables

That is the difference between "I used an image model today" and "my team shipped a 40-asset campaign before lunch."

The Bottom Line

gpt-image-2 is the most important image model release of 2026 so far, because it does three things no single prior model did simultaneously: it reasons, it writes text accurately at speed, and it returns coherent multi-image sets from one prompt. Individually, each capability existed elsewhere. Together, they compress a creative workflow that used to span five tools and three rounds into a single call.

It is not the end of Nano Banana Pro, Seedream, Flux or Midjourney. It is the end of the era where you could get away with one image model. The best creative teams of the next 12 months will be the ones that orchestrate four or five — and that is exactly what modern AI workflow platforms are built for.

XainFlow runs gpt-image-2 alongside Nano Banana 2, Seedream 5.0 Lite, Recraft v3, Flux, and a full video-model stack in a single workspace. Build a multi-model pipeline once, run it as many times as your team ships. Explore Flow Studio →

Frequently Asked Questions

What is gpt-image-2?

gpt-image-2 is OpenAI's new flagship image generation model, launched on April 21, 2026 as part of ChatGPT Images 2.0. It is the first image model to integrate reasoning capabilities — a 'Thinking' mode where the model plans and verifies images before finalizing output — alongside near-perfect text rendering, 2K resolution via the API, multilingual support for Japanese, Korean, Chinese, Hindi and Bengali, and the ability to generate up to eight coherent images from a single prompt.

Is gpt-image-2 better than Nano Banana Pro?

It depends on the task. In independent LM Arena blind tests, gpt-image-2 leads Nano Banana Pro in text rendering (near-100% accuracy), UI rendering, world knowledge, and speed (~3 seconds vs. 10–15 seconds). Nano Banana Pro still wins in multi-reference consistency with up to 14 reference images, hyper-realistic portraiture, the Photoshop/Figma ecosystem, SynthID watermarking, and copyright indemnification. For text-heavy designs, UI mockups and slides, use gpt-image-2. For portraits and complex reference compositions, Nano Banana Pro remains the better pick.

How much does gpt-image-2 cost?

gpt-image-2 uses tiered per-image pricing at 1024×1024: approximately $0.006 (low quality), $0.053 (medium quality), and $0.211 (high quality). Instant mode is included for all ChatGPT users — including the free tier — and for Codex users. The advanced Thinking mode and multi-image reasoning features are restricted to ChatGPT Plus, Pro and Business subscribers. The API is also rolling out through Microsoft Azure AI Foundry.

What is the difference between Instant and Thinking mode?

Instant mode produces images in roughly 3 seconds using the base model — ideal for rapid ideation, social content and high-volume production. Thinking mode, available on Plus/Pro/Business plans, runs gpt-image-2 through an extended reasoning loop: the model analyses the brief, searches the web for real-time information when needed, generates multiple candidate compositions, verifies its own output, and returns up to 8 coherent variants from a single prompt. Use Thinking mode for campaign kits, slide decks, infographics and typography-heavy designs.

Can I use gpt-image-2 in XainFlow?

Yes. XainFlow already supports the OpenAI gpt-image family in its image-model lineup alongside Nano Banana 2 (gemini-3.1-flash-image-preview), Seedream 5.0 Lite, Recraft v3, Flux variants, and more. Inside Flow Studio you can build multi-model workflows — for example, use gpt-image-2 for text-heavy hero assets, Nano Banana Pro for hyper-realistic product shots, and Seedream for knowledge-grounded compositions, all in a single pipeline.